Join the conversation about transparent and explainable AI at the TTC Summit 2022

This year on the 4th & 5th May we were joined virtually by some of the world's leading thinkers on AI to explore questions of trust, explainability and control. Together we sought answers to some of the most challenging questions around AI Accountability and how to operationalize it.

The panel discussions and talks were recorded and will be available to view here over the coming weeks. You can sign up for our newsletter to be notified when these videos drop. We'll also be publishing a blog post summarizing high-level insights from the Summit next week, so stay in touch!

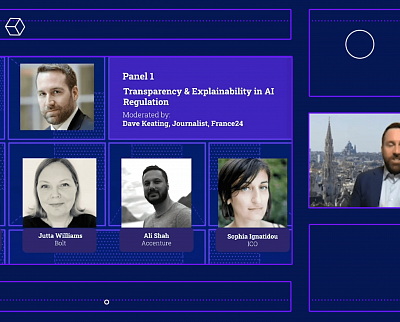

Panel 1

Transparency & Explainability in AI Regulation

Moderated by:

Dave Keating, Journalist, France24

Panel 2

AI & Society: Demonstrating Accountability

Moderated by:

Dr. Christine Custis, Partnership on AI

Panel 3

IMDA's Partnership with Meta on AI Explainability

Panel 4

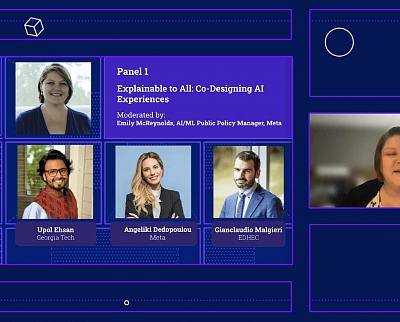

Explainable to All: Co-Designing AI Experiences

Moderated by:

Emily McReynolds, AI/ML Public Policy Manager, Meta

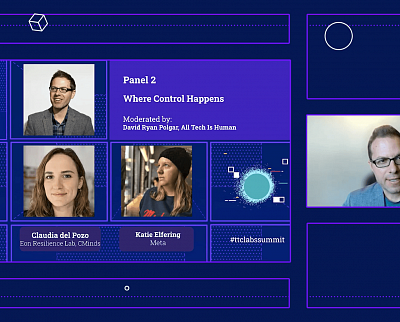

Panel 5

Where Control Happens in AI

Moderated by:

David Ryan Polgar, All Tech is Human

LT 1

Lightning Talk 1

Luke Vilain, Lloyds Bank

LT 2

Lightning Talk 2

Shubham Negi, Betterhalf

LT 3

Lightning Talk 3

Chavez Procope, Meta - AI System Cards

LT 4

Lightning Talk 4

Patrick Gage Kelley, Google - Discover AI in Daily Life

LT 5

Lightning Talk 5

Jenny Romano & Pedro Henriques, The Newsroom

Our Speakers

Thank you to all of our amazing speakers who joined us on the 4th and 5th May 2022!

Dr. Christine Custis

Head of Fairness, Transparency, Accountability and Safety at the Partnership on AI

Dr. Christine Custis is the Head of Fairness, Transparency, Accountability and Safety at the Partnership on AI where her work focuses on ABOUT ML (Annotation and Benchmarking on Understanding and Transparency of Machine learning Lifecycles). This initiative aims to bring together a diverse range of perspectives to develop, test, and implement machine learning system documentation practices at scale.

Rob Sherman

Vice President & Deputy Chief Privacy Officer for Policy, Meta

Rob Sherman is Vice President and Deputy Chief Privacy Officer for Policy at Meta. Since joining the company, then called Facebook, in 2014, Rob and his team have worked closely with privacy and policy experts inside and outside the company, along with product, engineering, research, and other teams, to protect people’s privacy across the company’s suite of products and technology. Prior to joining the company, Rob advised technology and media companies as a lawyer at Covington & Burling LLP. Rob lives with his wife and two daughters outside Washington, D.C.

Meeri Haataja

CEO & Co-founder at Saidot, Affiliate, Berkman Klein Center for Internet & Society

Meeri Haataja is the CEO and Co-founder of Saidot, a platform for AI governance and transparency used by enterprises and technology providers to apply systematic AI governance and communicate transparently about their AI. Saidot is on a mission to enable responsible AI ecosystems. Before starting her own company, Meeri led AI strategy and GDPR implementation in OP Financial Group, the largest financial services company in Finland.

Wan Sie Lee

Director, AI and Data Innovation, IMDA

At the Infocomm Media Development Authority (IMDA), Wan Sie Lee is Director of Artificial Intelligence (AI) and Data Innovation. She focuses on working with industry and government partners to enable data-driven innovation in Singapore, in order to anchor Singapore as a data hub and support economic growth. In addition, she is responsible for driving AI governance and growing a trusted AI ecosystem in Singapore. Wan Sie has extensive experience in technology use in the public sector, having developed Singapore’s long-term national ICT and digital economy strategies and implemented large, whole-of-government digital services. She has also advised other governments around the world on digitalisation.

Professor Barry O'Sullivan

Founding Director, Insight Centre for Data Analytics, UCC

Professor Barry O'Sullivan, FAAAI, FEurAI, FIAE, FICS, MRIA, is an award-winning academic working in the fields of artificial intelligence, constraint programming, operations research, AI/data ethics, and public policy at University College Cork. He frequently contributes to global Track II AI and cyber-related diplomacy efforts and is a co-founder of Stimul.ai and the SFI Centre for Research Training in AI.

Ali Shah

Global Principal Director, Responsible AI

Ali leads Accenture's efforts in making sure AI is a force for good, working in the interests of citizens and society. Ali's expertise in AI, digital transformation, policy, regulation, data ethics, privacy, and human rights helps ensure Accenture clients can understand and practically address the challenges AI and other emerging technologies present. Ali is passionate about making being responsible simple.

Prior to joining Accenture, Ali was Head of Technology Policy at the UK Information Commissioner's Office, where he was accountable for regulatory policy related to emerging technologies. His leadership and expertise focused on AI, privacy engineering, cross-regulatory investigations, and award-winning work helping designers and engineers re-engineer the internet with children's safety at the core. Ali has also held roles at the BBC related to emerging technology strategy, architecture, and engineering.

Claudia del Pozo

Executive Director, Eon Resilience Lab, C Minds

Claudia Del Pozo is the Executive Director of the Eon Resilience Lab of C Minds, a Mexican innovation agency that promotes the ethical and responsible use of new technologies for good in Latin America. She is currently coordinating the world's very first public policy prototype for transparent and explainable AI systems in Mexico and is supporting Mexico’s post-COVID recovery by exploring the potential role of responsible AI for this purpose in a variety of initiatives and how emerging risks can be mitigated.

In the past, Claudia coordinated and co-authored Mexico's National AI Agenda from the IA2030Mx Coalition and participated in the creation of Mexico’s bases for AI strategy, placing Mexico among the first 10 countries to have an AI strategy. She has co-authored a book as well as various reports and policy recommendations to promote digitalization and the ethical use of data and AI in Latin America, and is a TEDx speaker.

Luis Aranda

AI Policy Analyst, OECD

Luis Aranda is an Artificial Intelligence policy analyst at the OECD, which he joined in 2017. Before joining the OECD, he worked as a Strategist and Business Program Manager at Microsoft, where he conducted executive-level strategy, operations, and end-to-end accelerated-growth projects. Luis has also worked as an engineer for Grupo Bimbo and the United Nations. His work and research interests lie at the intersection between technology, social inclusion and policy. He holds a Bachelor’s degree in engineering, a Master’s in applied math and a PhD in economics.

Chavez Procope

Product Designer, AI Research, Meta

Chavez Procope, originally from St. Kitts, is a Product Designer with 7 years of experience, currently working alongside Meta’s Responsible AI team. His focus is external AI documentation, with the goal of helping people understand how and why AI systems at Meta perform the way they do. He’s the lead designer for Meta’s System Cards. In his free time he’s typically spending time with his wife and daughter or binging Netflix.

Sophia Ignatidou

Principal Policy Adviser, AI, UK Information Commissioner's Office (ICO)

Sophia Ignatidou is a Group Manager for Technology Policy at the ICO. She has been leading on AI policy, advising domestic and international stakeholders, and representing the ICO at the Global Privacy Assembly, the Algorithmic Processing workstream of the Digital Regulation Cooperation Forum and the AI Committee of the BSI. Prior to joining the ICO, Sophia was an Academy Fellow at the Royal Institute of International Affairs (Chatham House), researching AI and disinformation.

Gianclaudio Malgieri

Associate Professor of Law and Technology, EDHEC

Gianclaudio Malgieri is an Associate Professor of Law and Technology at the EDHEC Business School in Lille (France), where he conducts research at the Augmented Law Institute. He is Co-Director of the Brussels Privacy Hub and conducts research on and teaches Data Protection Law, AI regulation, Consumer protection in the digital market, and Data Sustainability. He has authored more than 60 publications, including articles in leading international academic reviews.

Upol Ehsan

Human-Centered Explainable AI Researcher, Georgia Tech

Upol Ehsan is a doctoral candidate at Georgia Tech and an affiliate at the Data & Society Research Institute. Combining his expertise in AI and Philosophy, his work in Explainable AI (XAI) has coined the term Human-centered Explainable AI (a sub-field of XAI) to foster a future where anyone, regardless of their background, can use AI-powered technology with dignity. His work has received multiple awards in top-tier academic venues like CHI and been covered in major media outlets. By promoting equity in AI, he wants to ensure stakeholders who aren’t at the table do not end up on the menu.

Twitter: @upolehsan

LinkedIn: https://www.linkedin.com/in/uehsan

ResearchGate: https://www.researchgate.net/profile/Upol-Ehsan

Jutta Williams

Head of Privacy, Bolt

Jutta Williams is Head of Privacy at Bolt, a one-click, cross-marketplace commerce platform. Jutta works across all engineering and platform teams to implement privacy best practices. She was recruited to Silicon Valley in 2015 by Google where she led data protection for their Medical AI research team (later publicly launched as Google Health) and was a senior member of the technical staff at Facebook's Central Privacy and AI Infra org. She was the inaugural Chairperson and Head of the US delegation to ISO for AI Standards.

David Ryan Polgar

Founder & Director, All Tech is Human

David Ryan Polgar is the founder and director of All Tech Is Human, a non-profit committed to uniting a broad range of stakeholders to co-create a better tech future, along with being an international speaker and frequent media commentator. He currently sits on TikTok's Content Advisory Council.

Lee Gang

Head of Data Science, X0PA

Lee Gang leads the data science team at X0PA AI, a recruitment software company that aims to maximize objectivity in hiring. He is experienced in utilizing data science technology and tools to deliver impactful business outcomes and believes in proper governance for quality data science. He is also experienced in the HR and healthcare domains. He currently also leads the AI & Data Governance initiatives in X0PA AI.

Finale Doshi-Velez

Assoc. Professor, Harvard Paulson School of Engineering

Finale Doshi-Velez is a John L. Loeb associate professor in Computer Science at the Harvard Paulson School of Engineering and Applied Sciences. She completed her MSc from the University of Cambridge as a Marshall Scholar, her PhD from MIT, and her postdoc at Harvard Medical School. Doshi-Velez uses big data for medical applications, including diagnosis of disease. She earned her master's degree in 2007 from MIT, and joined Harvard University in 2014.

Kolja Verhage

Digital Ethics Manager, Deloitte

Kolja Verhage is an expert on Digital Ethics, Technology Policy and AI Governance, from a Dutch, European and US perspective. As manager of the Digital Ethics team at Deloitte, Kolja is working with a wide range of multinational corporations and government agencies to help build digital solutions that are aligned with human values, respect the rule of law and support society. Currently, he is active in the education sector and financial services industry, building policy frameworks that guide the development of digital solutions that both empower the organization's core mission and respect fundamental human rights.

Luke Vilain

Data Ethics Senior Manager, Lloyds Bank

Luke works in data ethics at Lloyds Banking Group, designing processes and tooling for the detailed application of explainability and fairness within machine learning systems.

Angeliki Dedopoulou

Public Policy Manager, AI & Fintech, Meta

Angeliki Dedopoulou is a Public Policy Manager at Meta. Before joining Meta's EU Public Affairs team, she was a Senior Manager of EU Public Affairs at Huawei, responsible for the policy area of Artificial Intelligence, Blockchain, Digital Skills and Green-related policy topics. Ms Dedopoulou is a Member of the Board of the Hellenic Blockchain Hub and member of the AI4EU working groups on Ethical AI. She studied Political Science and History in Greece, Sociology in France and European Governance in Luxembourg.

Aden Rolfe

Creative Lead, Content Strategy, Craig Walker

Aden Rolfe heads up content strategy for Craig Walker, an Asia-Pacific design and research studio. With a background in copywriting and editing, he focuses on integrated approaches to communication, using content and design to unpack problems and articulate ideas.

Richard de Vries

UX Designer at Philips

Richard de Vries is an award-winning UX Designer at Philips. Over 20 years ago Richard created his first website, and since then he has continuously used his creativity to design for a better world. This journey has brought him from designing the first internet banking interfaces to designing user experiences, services and business models for medical devices today. At Philips Design, Richard has worked on several projects on both the B2B and B2C sides of the business. For B2B, Richard worked together with the design team in Cambridge, Massachusetts on the design of the B2B e-commerce journey.

Dhivya Kanagasingam

Post-Graduate Candidate, LKY School of Public Policy

Dhivya is a gender and human rights specialist currently completing a Master’s in Public Policy degree at the Lee Kuan Yew School of Public Policy. Dhivya partnered with Meta, TTC Labs and Open Loop for a final-year group capstone project, “Resolving AI Governance Challenges through Policy Prototyping”, where she, along with fellow team members, contributed learnings towards maximizing the efficacy of policy prototyping.

Adam Bargroff

Privacy Policy Manager, TTC Labs at Meta

Adam Bargroff, PhD, is a Privacy and Public Policy Manager at Meta & TTC Labs. He previously worked in academia and non-profits to build education partnerships for digital and 21st century skills development. He also spent time at the European Commission and Dublin City Council on innovation aspects of the digital agenda.

Jenny Romano

Co-Founder & CEO, The Newsroom

Jenny Romano is Co-Founder and CEO at The Newsroom, born to fight misinformation and promote plurality online. Founded in December 2020, The Newsroom developed an Explainable AI-powered solution that assesses the trustworthiness of online news articles, and helps users understand the wider context behind the news. Before her journey at The Newsroom, Jenny worked in Digital Sales at Google, where she managed some of the largest clients in Southern Europe and mentored startups focused on UN SDGs across Africa and Europe.

Pedro Henriques

Co-Founder and Head of Product, The Newsroom

Pedro Henriques is Co-Founder and Head of Product at The Newsroom, on a mission to fight misinformation and promote plurality online. Since the company’s foundation, Pedro has been leading the development of The Newsroom’s Explainable AI-powered solution that assesses the trustworthiness of online news, and frames it in context. Before his entrepreneurial journey, Pedro led a Data Science team at LinkedIn, where he researched how users interact, connect and share content on one of the world’s largest social media platforms.

Shubham Negi

Lead UX Designer, Betterhalf

Shubham Negi is leading the UI/UX design team of Betterhalf, and is passionate about creating a better experience for all users. Betterhalf is revolutionising the marriage space in India by creating a digital space for finding and connecting with potential matches in the Betterhalf mobile app. Shubham's previous work includes working as a UI/UX designer at Remedico, an online dermatology service for Gen-Zs.

Emily McReynolds

AI/ML Public Policy Manager, Meta

As part of Meta’s AI Policy team, Emily leads research engagement on responsible AI with academia and civil society. At Meta she has co-authored AI/ML explainability documentation projects including System Cards and Method Cards. Before joining Meta she enabled ethical research and responsible innovation through end-to-end data strategy for HCI, ML, and AI at Microsoft Research. Her commitment to the U.S. Pacific Northwest and the University of Washington comes from her years as the program director for the University of Washington’s Tech Policy Lab, an interdisciplinary collaboration across the CS, Information, and Law schools, where she co-led projects on augmented reality, driverless cars, and Toys That Listen.

Wednesday 4th May 2022

Welcome & Opening Remarks

10:00 ET / 15:00 BST / 16:00 CEST

AI Transparency & Explainability in Regulation

In this panel discussion we will explore approaches to AI Transparency and Explainability as they are expressed in current regulation both in Europe and North America, specifically the EU AI Act and the Algorithmic Accountability Act. What are the overarching Transparency & Explainability requirements we’re seeing in AI legislation? What are the goals and principles, and are there any gaps we should be looking to address through design?

10:20 ET / 15:20 BST / 16:20 CEST

AI & Society: Demonstrating Accountability

What does it mean for AI systems to be “accountable” to society, and how can we implement accountability in practice through novel tools and processes in a way that provides real insight for both expert and non-expert audiences?

11:00 ET / 16:00 BST / 17:00 CEST

Lightning Talks: Implementing Explainability in UX/UI

A selection of Lightning Talks which showcase interesting approaches to AI Trustworthiness and UX Design from both industry leaders and startups.

11:40 ET / 16:40 BST / 17:40 CEST

Preview of Day 2 & Closing Remarks

Thursday 5th May 2022

Lessons in Explainability from Startups

Meta partnered with the Infocomm Media Development Authority (IMDA) and worked with selected AI-powered startups from around the world on a product and policy prototyping initiative which aimed to test design-led approaches to AI Explainability. We are launching a report based on this project, “People-Centric Approaches to AI Explainability”, which tests product and policy guidance to provide a series of practical insights, considerations and observations derived from co-created explainability prototypes.

17:00 SGT / 10:00 BST / 11:00 CEST

Welcome & Recap of Day 1

A quick recap of what we learned in Day 1 and scene-setting for Day 2!

10:00 ET / 15:00 BST / 16:00 CEST

Explainable to All: Co-Designing AI Experiences

How can we ensure that we are creating AI experiences which are transparent and explainable to everyone using digital services? What are the key principles of designing these experiences, and how do we think about Explainable AI in the broader context of digital literacy and education? Can and should we involve end users in these design decisions, and if so, how can we facilitate their involvement?

10:10 ET / 15:10 BST / 16:10 CEST

Where Control Happens

An exploration of where in the digital experience a user can exert control over the data used as inputs, feedback into the processes and choice of outputs of AI systems. Can controls and feedback loops be used to empower users, and to improve their digital literacy and understanding of AI?

Is more control always better, or should there be limits to an end user's control over AI?

10:50 ET / 15:50 BST / 16:50 CEST

Lightning Talks: Implementing Explainability in UX/UI

A selection of Lightning Talks which showcase interesting approaches to AI Trustworthiness and UX Design from both industry leaders and startups.

11:30 ET / 16:30 BST / 17:30 CEST
